At Nirvana, we’re on a mission to modernize the commercial trucking insurance industry using data and technology. When we talk about legacy systems in this space, we aren't just complaining about outdated software; we’re dealing with absolute mountains of paper, endless PDF documents, and painstaking manual reviews of claims and evidence.

Naturally, when Large Language Models (LLMs) gained traction, we saw a massive opportunity. We could automate these heavy, manual processes and free up our experts to focus on complex risk assessments and investigations. With a green light from leadership, our engineering teams dove into GenAI, spinning up Proofs of Concept (PoCs) and relying on "vibe testing" to gauge how well they worked.

But here’s the reality check: in the high-stakes world of commercial insurance, "vibes" simply aren't enough. If our model hallucinates a claim investigation or misreads a risk factor, we’re looking at severe financial liability, ethical breaches, and serious reputational damage. We quickly realized that to graduate from a cool demo to a production-grade system, we needed a rigorous, scalable evaluation engine.

The catch? We were staring down a classic "Cold Start" problem: we had absolutely no Golden Dataset (Ground Truth).

Here is exactly how our team moved beyond the vibe check to build a reliable, bootstrapped evaluation architecture for one of our most complex products.

Why "Vibe Checks" Don't Scale

"Vibe testing" is exactly what it sounds like: developers manually spot-checking a few outputs to get a subjective feel for how the model is doing. It’s great for initial intuition, but as a strategy for production systems, it is wholly inadequate. It masks two massive engineering risks:

- The Flakiness Problem (The Illusion of Accuracy)

LLMs are fundamentally stochastic. Even if you use the exact same prompt and model setup, it might give you the right answer 80% of the time, and fail the other 20% due to non-determinism. If you manually vibe-test 10 similar outputs, statistically, there is a ~38% chance you will see zero or only one error. This creates a dangerous illusion that your system is 90-100% accurate, even though real production users will be hitting that 20% failure rate. Without statistical measurement at scale, you are flying blind. - The "Whack-a-Mole" Trap

The second danger of ad-hoc testing is the nightmare of regression. Say you find a specific edge case where the model fails. You tweak the prompt to fix that local error. Without a comprehensive test suite, that local fix often causes unexpected regressions somewhere else. You fix the logic for "Trucking Liability," but you unknowingly break "Cargo Insurance". You whack one mole, and another pops up. Unless you have a global evaluation system measuring the impact of every change against your whole dataset, progress is impossible to track.

Architecting for Evaluability

Because Generative AI is ultimately a subset of Machine Learning, we based our evaluation design heavily on statistical principles focused on bias mitigation and confidence. We broke this into three core pillars:

- The Output: Defining exactly what we are evaluating (e.g., categories vs. unstructured text notes).

- The Golden Dataset: The historical ground truth we measure the output against.

- Evaluation Metrics: The business-logic driven metrics we care about (like prioritizing Recall over Accuracy for fraud detection).

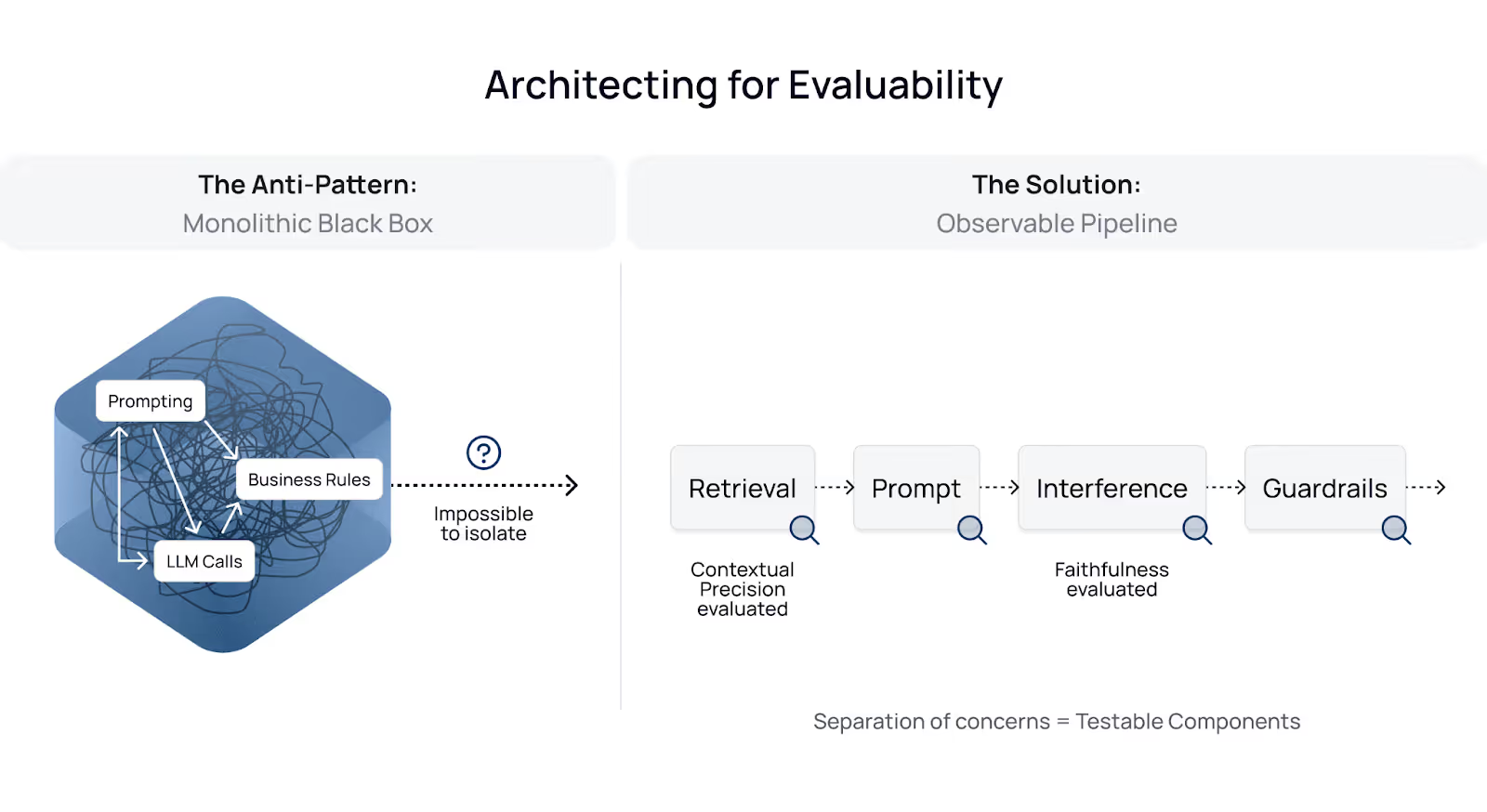

Before writing a single test, we had to look at our code architecture. Evaluation isn't just a script you run; it is an architectural choice. We identified a hierarchy of design patterns, ranging from "hard to evaluate" to "inherently testable".

- The Anti-Pattern: Monolithic Black Box. In our early builds, everything was tightly coupled. Prompting, business rules, and LLM calls were all mixed together in a single unit. This made isolation impossible. If an output was wrong, was it a bad prompt? Bad context retrieval? Or the model itself?

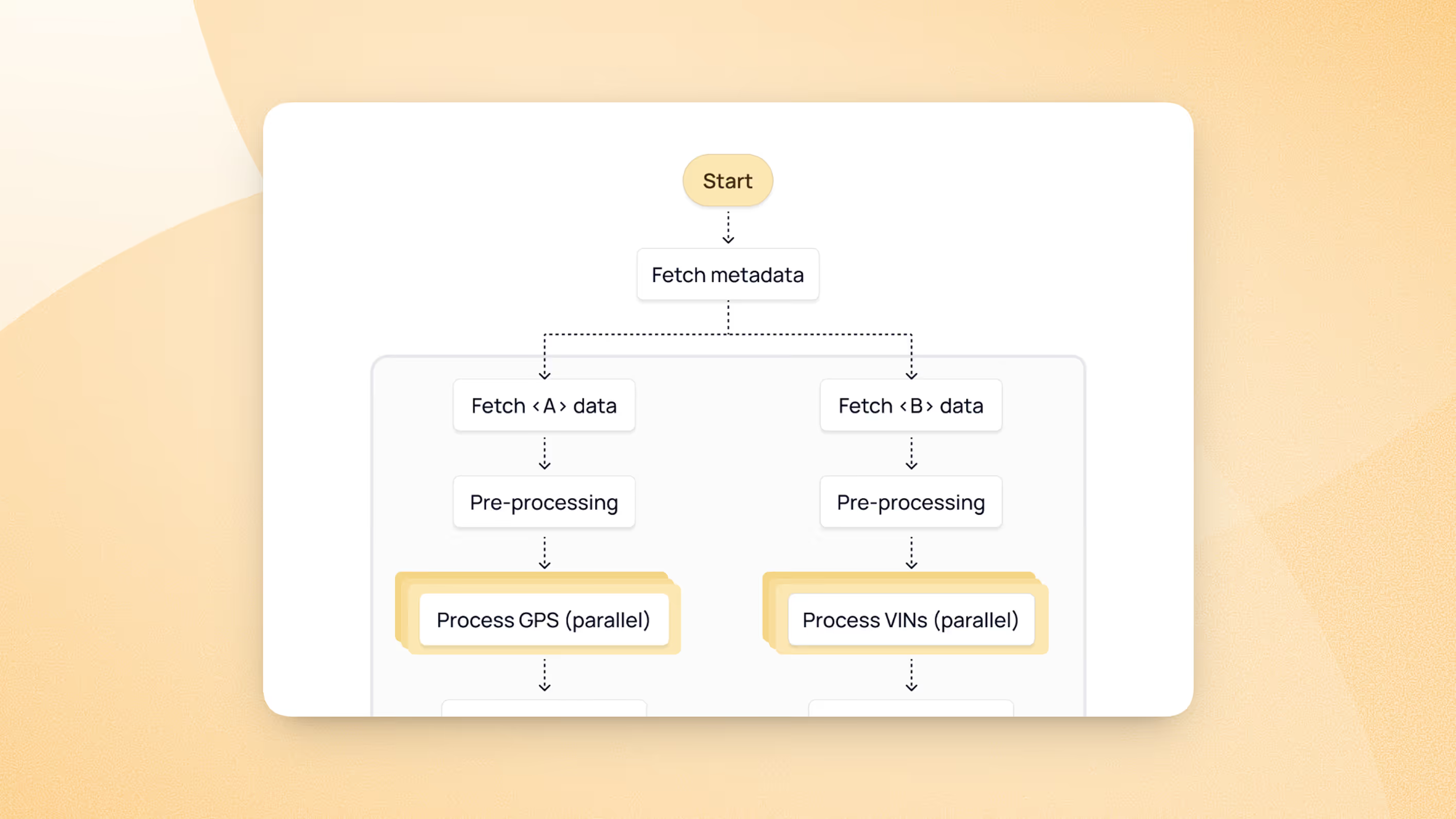

- The Solution: Observable Pipeline. We refactored our system into distinct, observable stages (Retrieval → Prompt → Inference → Guardrails).

This separation of concerns allowed us to test components individually. For example, in our RAG (Retrieval-Augmented Generation) workflows, we could measure Contextual Precision (did we find the right document?) separately from Faithfulness (did the LLM answer correctly based on that document?).

Case Study: Claim Coverage Determination

To illustrate our journey, let’s look at one of our most complex challenges: Claim Coverage Determination.

This was difficult because it lacked a clean golden dataset; historical data consisted of third-party notes that weren't easily consumable. Furthermore, the output wasn't a simple binary class (Covered/Not Covered). It was a conditional logic puzzle: Part A is covered, Part B is pending additional data, Part C is denied.

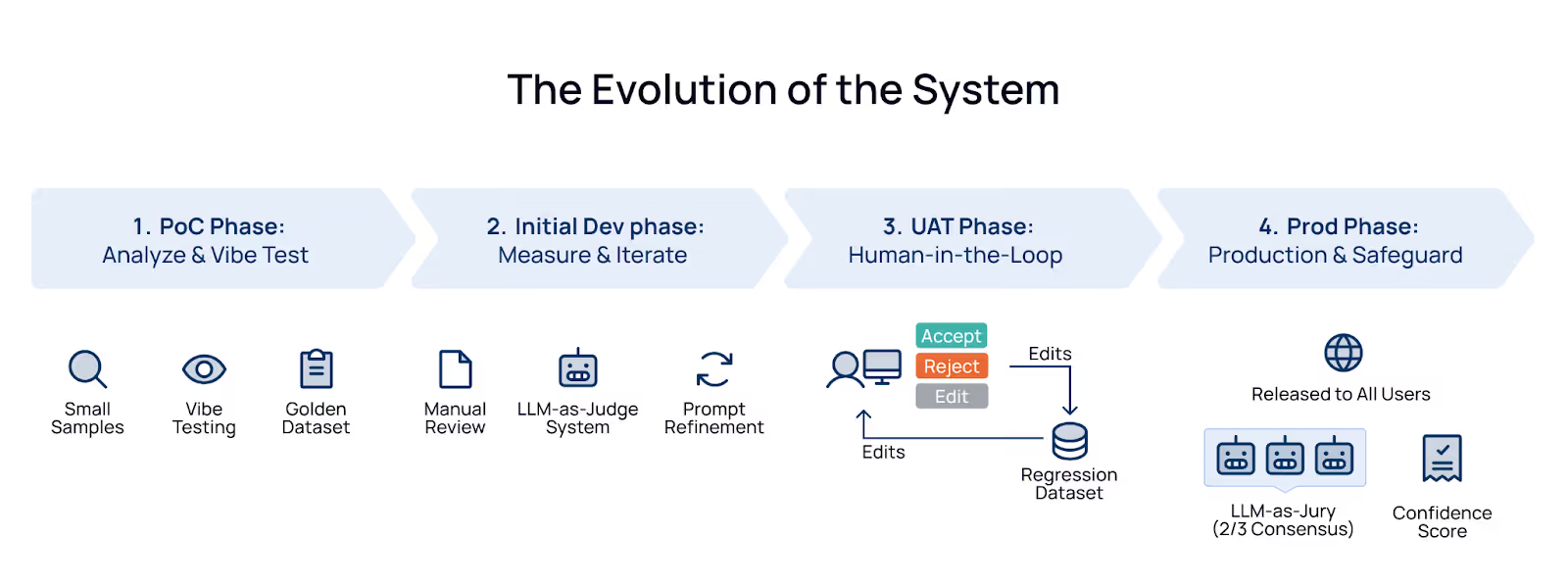

The Evolution of the System

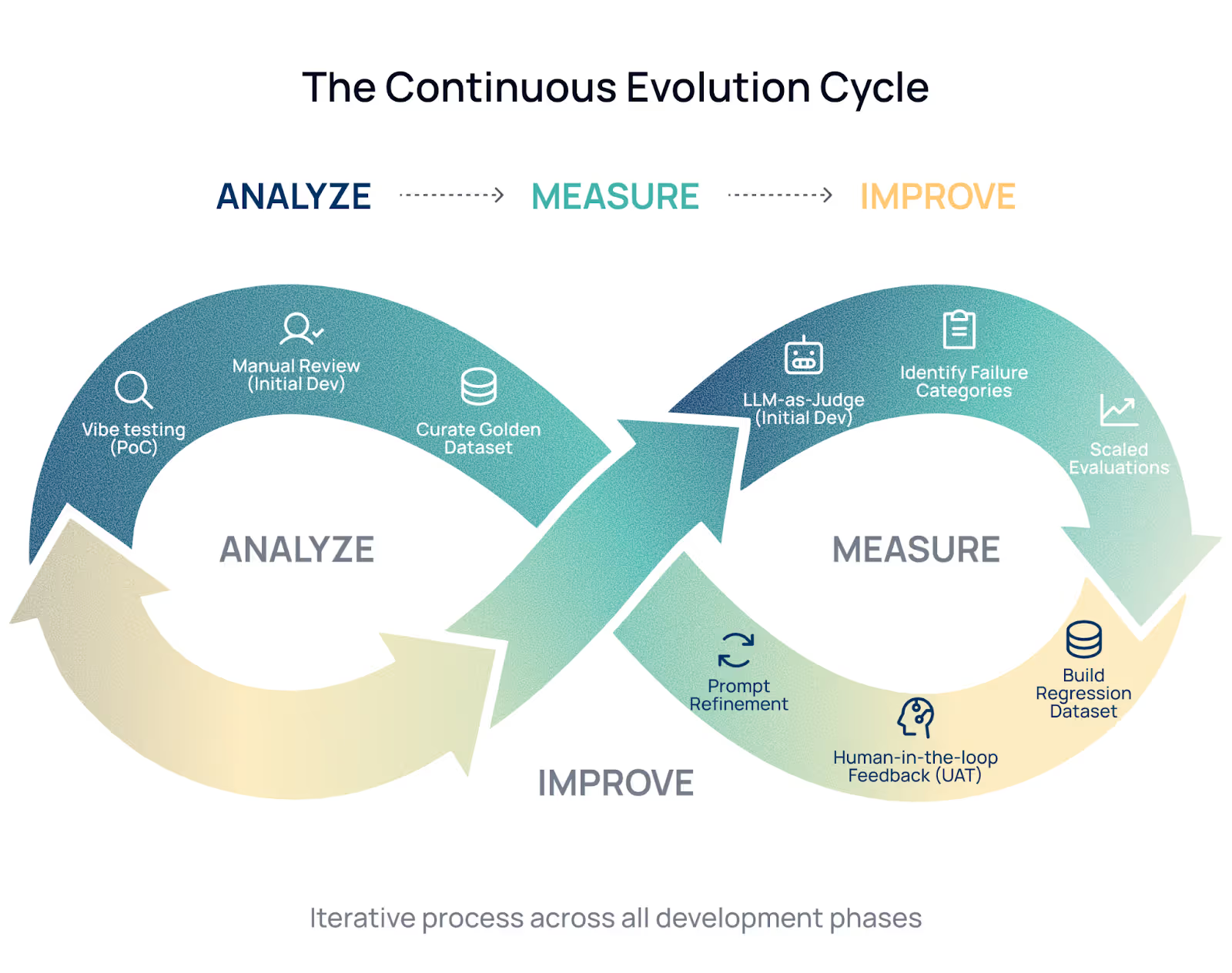

We phased our development to ensure we were building on solid ground, moving from "Analyze" to "Measure" to "Improve".

- PoC Phase: We started with simple vibe testing on small samples just to see if the idea was feasible. At the same time, we started manually curating a golden dataset.

- Initial Dev Phase: We manually (non-negotiable) reviewed 50–100 outputs to identify failure categories. We then built an LLM-as-Judge system to run scaled evaluations. By analyzing errors and refining the prompts, we iteratively improved the product.

- Result: We moved from ~70% to ~87% accuracy, giving us the confidence to proceed.

- UAT Phase: We introduced a "Human-in-the-Loop" step. Select claim adjusters were given the ability to accept, reject, or edit the AI's output. Every single edit became a precious data point. We used this data to improve the LLM-as-Judge and build a regression dataset.

- Result: We moved from ~87% to ~92% accuracy, giving us the confidence to productise.

- Prod Phase: We released the system to all users. We now use a hybrid of human feedback and an "LLM-as-Jury" approach (where 2 out of 3 models must agree) to assign confidence scores to outputs in real-time.

- Result: At production scale, this is expected to save ~80% of adjusters’ time on claim coverage analysis.

Deep Dive: Designing "LLM-as-Judge"

Because scaling manual review is impossible, we leaned heavily on the LLM-as-Judge pattern. The trickiest part is defining the rubric and actually evaluating the judge itself.

- Evaluation Without Ground Truth

When we lacked human-verified labels, we used a strong model to cross-validate the system's output against the source documents. We quickly learned that a generic "Pass/Fail" on the entire output was way too broad. Instead, we forced the judge to answer highly granular checks:

- Is the policy active?

- Is the nature of loss determined correctly?

- Are towing limits accurate?

By granularly grading these specific logic paths, we could identify exactly which part of our pipeline was underperforming. - Evaluation With Ground Truth (The "Diff" Analysis)

Once we had human-reviewed data, things changed. If a user accepted the output, we labeled it "Correct". If they edited it, we needed to know why. We designed the judge to analyze the semantic diff between the AI output and the human edit. Was the user fixing a factual error, making a stylistic tweak, or removing irrelevant info? This helped us separate "incorrect expectation errors" (user preference) from genuine "model errors" (hallucinations).

Tuning the Judge (Meta-Evaluation)

An LLM judge is still just an LLM—it can hallucinate too. To trust it, we treated prompt engineering for the Judge as a classic ML problem using a Train/Test split.

- Split the Data: We took 100 manually reviewed samples and split them 50/50 into a Train/Test split using stratified random sampling. (Pro-tip: If you have enough data, always use a holdout/blind set for unbiased validation ).

- Engineer the Prompt: We iterated on the Judge's prompt to minimize evaluation errors on the Train set.

- Note on Automation: We attempted to use DSPy to automate this prompt optimization. However, the complexity of our logic checks required more nuance than the framework could handle at the time, so we fell back to manual iteration.

- Validate: We took our top 10 prompt iterations and ran them against the Test set. The winner was the prompt that kept accuracy high on both sets, proving we hadn't just overfitted the evaluator.

From Vague to Robust: Evolving the Prompt

You are an expert AI model evaluator. Your objective is to strictly assess an AI model's ability to follow complex, multi-step instructions that require sequential tool usage.

You will base your evaluation on the following components:

1. System/User Prompt: The original instructions provided to the AI.

2. Conversation History: The sequence of user requests, tool calls made by the AI, and the data returned by those tools. This history is your absolute source of truth.

3. Model Response: The final JSON output generated by the AI, which you are evaluating.

[BEGIN DATA]

************

[Prompt & Conversation History]:

{input}

************

[Model Response (Candidate for Evaluation)]:

{output}

************

[END DATA]

Evaluation Task & Criteria:

Please follow these steps to evaluate the response:

1. Analyze: Review the original prompt requirements. Cross-reference the Model Response against the Conversation History to verify factual accuracy and ensure all instructions were followed.

2. Score: Assign a score from 1 to 5 based on the following rubric:

- 5: Completely correct; flawless execution of instructions and accurate use of tool data.

- 4: Mostly correct; minor omissions or slight deviations that do not impact the overall utility.

- 3: Partially correct; failed to follow some instructions or slightly misinterpreted tool data.

- 2: Mostly incorrect; major errors, significant missing data, or severe formatting issues.

- 1: Entirely incorrect; complete failure to follow instructions or hallucinated data.

3. Critique: Draft a concise justification for your assigned score, citing specific examples from the data.

Output Format Requirements:

You MUST return ONLY a valid JSON object with exactly two keys: "score" (an integer) and "critique" (a string). Do not include markdown formatting (e.g., ```json), conversational filler, or any text outside of the JSON object.

At first glance, the prompt above looks robust. It has a rubric, strict output constraints, and a clear role. But, when deployed in a complex, multi-step agentic workflow of evaluating insurance claim decisions, it quickly reveals several critical flaws.

Here is a breakdown of why this approach is still too vague for enterprise-grade LLM evaluation:

- The "Garbage In, Garbage Out" Trap (Instruction Bias)

This prompt asks the Judge model to evaluate the output based strictly on the user's original instructions. But that introduces a major edge case: what if the user's instructions are inherently flawed, contradictory, or maliciously injected?

By anchoring the evaluation to the user's prompt rather than a verified, objective "Ground Truth" or system-level policy, the Judge is essentially forced to validate the model's obedience rather than its factual accuracy. If a user asks the model to execute an action that violates system policy, and the model complies, the Judge will cheerfully hand out a perfect 5/5 score.

- The "Fuzzy" Scoring Rubric

Even with definitions for scores 1 through 5, the boundaries between neighboring numbers remain dangerously subjective.

For an LLM, the line between a 3 (Partially Correct) and a 4 (Mostly Correct) fluctuates based on the context window, the specific phrasing of the output, or even just the model's "mood" on a given run. More importantly, a 1-5 scale rarely translates well to automated deployment pipelines. Where is the exact line for an acceptable output? Is a 4 good enough to serve to a customer? To build a truly deterministic system, it is often necessary to abandon the 1-5 scale entirely in favor of strict, binary Pass/Fail criteria for specific components. - Unbounded Evaluation

This is perhaps the most dangerous oversight. The prompt asks the Judge to evaluate the entire output in a single pass.

In an insurance claim workflow, the model often needs to check policy expiration dates, validate towing coverage, and assess cargo loss logic—all of which involve heavy conditional routing (for instance, towing rules should not be evaluated if it is a cargo-only loss). Forcing an LLM to judge this massive, multi-component JSON object all at once using raw data practically invites hallucinations.

Furthermore, Root Cause Analysis (RCA) becomes a nightmare. If the Judge outputs an overall score of "2", it requires processing of the text-based "critique" just to figure out which specific component of the system failed. - The Missing Examples (Zero-Shot Ambiguity)

Finally, the prompt relies entirely on "zero-shot" reasoning. It gives the Judge rules, but no concrete examples of what a perfect or terrible output actually looks like in the context of the data.

While a highly capable model might occasionally deduce the correct criteria on its own, relying on that is a massive gamble. When an evaluator's performance is lagging, the absence of "few-shot" prompting—providing one or two golden examples of correct vs. incorrect outputs—leaves the model constantly guessing at unwritten expectations.

A robust prompt:

## **Role & Objective**

You are an expert AI judge specializing in the adjudication of commercial truck insurance claims. Your primary objective is to rigorously evaluate the output of an AI Claims Adjuster by fact-checking its conclusions against a provided set of input data derived from various tool calls.

## **Reference Materials**

Your evaluation must be based solely on the provided input data. This data is categorized into two types, each sourced from specific tools:

- Claims Data: Information related to the specific loss event.

- Policy Data: Information related to the insurance contract.

## **Data for Evaluation**

You will be given the following two pieces of information:

- **[Input Data]**: The complete set of data returned from the tool calls listed above. This is your **source of truth**.

- **[AI Adjuster Output]**: The JSON output generated by the AI Claims Adjuster that you must evaluate.

[BEGIN DATA]

************

[Input Data]:

{input}

************

[AI Adjuster Output (Candidate for Evaluation)]:

{output}

************

[END DATA]

## **Evaluation Task & Criteria**:

Do not evaluate the response holistically. Instead, evaluate the output against these specific, independent components:

1. Policy Validity Check: Did the model correctly determine if the policy was active on the date of loss using the tool data? (Pass/Fail)

2. Loss Coverage Check: Did the model correctly apply loss coverage routing rules (e.g., third-party liability, cargo loss was correctly identified and its coverage status was accurately assessed based on the policy.)? (Pass/Fail)

3. Applicable deductibles check: Did the model correctly identify applicable deductibles to the covered loss? (Pass/Fail)

EXAMPLES OF EVALUATION:

Example 1 (Good Output):

The tool data showed the policy expired on 10/01, but the date of loss was 10/15. The model correctly denied the claim.

Result -> "Policy Validity Check": "Pass"

Example 2 (Bad Output):

The claimant requested Physical Damage loss for a cargo-only policy coverage. The model approved the loss coverage, violating the coverage rules in the System Policy.

Result -> "Loss Coverage Check": "Fail"

Output Format Requirements:

You MUST return ONLY a valid JSON object containing an array of evaluations. Do not include markdown formatting or conversational filler.

Required Schema:

{

"evaluations": [

{

"component_name": "Name of the check (e.g., Policy Validity Check)",

"status": "Pass or Fail",

"reasoning": "A 1-2 sentence explanation referencing specific Tool Data."

}

]

}

To build a reliable evaluation pipeline, the approach needs to shift. Instead of asking the LLM Judge, "How good is this out of 5?", the prompt must ask, "Did the model correctly execute these specific system rules based on the ground truth?"

Here is exactly why the robust prompt above solves the critical scaling issues identified earlier:

- Grounding in Truth (Eliminating Instruction Bias)

The biggest shift in this optimized prompt is the complete removal of the user's original instructions from the evaluation criteria. Instead, the prompt explicitly defines a strict Source of Truth: the Claims Data and Policy Data returned by tool calls.

By forcing the AI Judge to evaluate the Adjuster's output only against hard data and system policies, the "garbage in, garbage out" loop is broken. Even if a flawed user prompt leads to the Adjuster approving an invalid claim, the Judge will catch it because it is looking at the actual policy document, not the user's defective prompt. - Binary Logic Over "Vibes" (Fixing the Fuzzy Rubric)

Gone is the subjective 1-5 scale. In its place is a strict, binary Pass/Fail system.

This is a game-changer for automated testing. An LLM's interpretation of a "4 out of 5" might change from day to day, but "Pass/Fail" establishes a programmatic threshold. When an evaluation outputs a definitive "Fail," a CI/CD pipeline or routing script knows exactly how to handle it, like flagging the claim for human review. - Targeted Adjudication (Bounding the Evaluation)

Instead of boiling the ocean by asking the Judge to review the entire claim holistically, this prompt breaks the evaluation down into highly specific, independent components.

By forcing the Judge to look at one distinct variable at a time, the cognitive load on the model drops significantly, which drastically reduces hallucinations. More importantly, this makes Root Cause Analysis (RCA) trivial. - The Power of Examples (Solving Zero-Shot Ambiguity)

To stop the Judge from guessing what a good or bad evaluation looks like, this prompt uses "few-shot" prompting.

By providing concrete, domain-specific examples—like showing exactly how a date-of-loss discrepancy should result in a "Fail" for the Policy Validity Check—the prompt establishes a clear blueprint. The Judge no longer has to infer the unwritten rules of commercial truck insurance; the exact expectations are laid out in front of it. - Strict Schema Enforcement

Finally, the prompt demands a heavily constrained JSON output containing an array of evaluations. By explicitly banning markdown formatting and conversational filler, and by providing the exact key-value pairs required, the output is guaranteed to be machine-readable. This ensures that the evaluation data can be seamlessly parsed and logged by backend scripts without breaking the JSON parser.

Production Safeguard: The LLM-as-Jury

While "LLM-as-Judge" helps us optimize the system offline, we needed a real-time safeguard for production decisions. Relying on a single model for critical risk assessments is dangerous due to individual model biases.

To solve this, we implemented an LLM-as-Jury architecture.

How It Works

Instead of asking one model for a verdict, we query a panel (e.g., 3 models or the same model with different temperatures).

- The Voting Mechanism: We aggregate the decisions. For a decision to be valid, we require a consensus (e.g., 2 out of 3 jurors must agree). Consensus could be weighted depending on individual evaluator’s performance.

- Confidence Scoring: The consensus level translates directly into a confidence score. If 2 out of 3 jurors agree, we assign a 67% confidence score.

- Actionable Flags: High-confidence outputs are processed automatically. Low-confidence outputs (lack of consensus) are flagged for immediate manual review.

This "Jury" approach mitigates the risk of a single model hallucinating and smooths out the noise inherent in LLMs, providing a fairer and more reliable evaluation.

Guiding Principles for Robust Evals

If you are building your own LLM evaluation system, here are the core principles that guided our success:

- Avoid Instruction Bias: Give your Judge independent criteria. Do not just feed the evaluatee's prompt into the evaluator, or it will inherit the generator's blind spots.

- Rubrics over Scores: A binary "Pass/Fail" is better than a vague 1-5 score. It forces clear decision-making and cleaner data.

- The Power of Critique: Always ask the Judge for a detailed critique alongside the score. This captures why it failed and gives you great data for future few-shot examples.

- Randomization: Randomize the order of few-shot examples in your prompt to prevent position bias and overfitting.

- Scale Insight, Don't Replace It: Humans have to learn the domain first; the LLM is just there to scale that learning.

- Solve "Whack-a-Mole" with Regression Testing: This is non-negotiable. Never fix an error in isolation. When you adjust a prompt, you must re-run your evaluation against the entire Regression Dataset to ensure you didn't break something else.

Final Thoughts

Taking an LLM from a cool demo to a production-grade system isn't about writing the perfect prompt; it's about building a rigorous, scalable evaluation engine. By leaving the "vibe checks" behind, we successfully turned a risky experiment into a trusted core component of Nirvana's insurance infrastructure.

Are you building your own LLM-as-Judge systems or fighting the "Whack-a-Mole" trap? We’d love to hear how you’re solving the ground truth “cold-start” problem. If you want to help us revolutionize the legacy-heavy commercial trucking insurance industry using data and technology, check out our open engineering roles!