As our engineering team at Nirvana scaled past 100 developers, merging a single PR started taking over an hour. Not because CI was slow, but because it kept getting invalidated due to continuous merges on main. Developers would rebase, rerun CI, and wait… only to find that another merge had already broken their build. The cycle would repeat multiple times before a PR could land. To fix this, we built NirvanaMQ, a speculative stateless merge queue that eliminates CI invalidation and enables near-instant merges at scale, handling 7,000+ PRs a year without a database, reducing average merge time from 60 minutes to under 5 minutes.

TL;DR

As Nirvana’s engineering team scaled past 100 developers, the standard rebase-CI-merge cycle became a productivity bottleneck. PRs kept getting invalidated by concurrent merges to main, forcing repeated CI reruns.

To solve this, the team built NirvanaMQ, a custom speculative, stateless merge queue:

- Speculative checks run CI on each queued PR as if all PRs ahead of it will pass, enabling parallel validation and effectively O(1) merge time within the speculative window (vs. O(N) for a sequential queue).

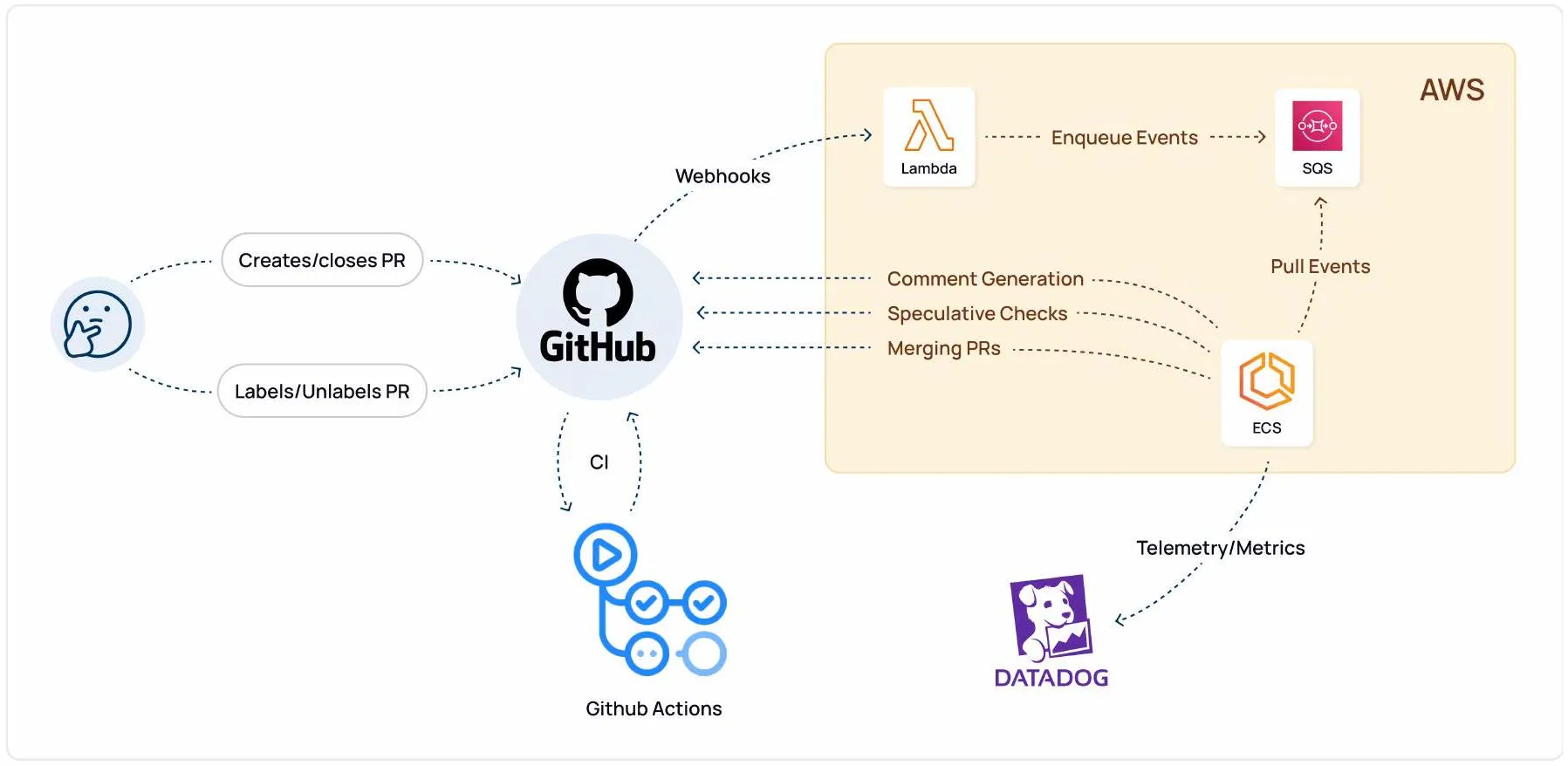

- Event-driven: Reacts to GitHub webhooks (buffered via Lambda + SQS, processed on ECS), with periodic polling as a safety net for delayed/missing webhooks.

- Stateless by design: No database. GitHub itself (labels, branch naming conventions) is the source of truth, enabling crash recovery by reconstructing queue state on startup.

- Key features over GitHub’s native MQ: skip-ahead for urgent PRs, unlimited queue size, granular notifications, timeline view, and dedicated observability via Datadog, all at negligible infra cost.

It started as a 10-week internship project, now merges 7,000+ PRs/year, and is a core piece of Nirvana’s engineering infrastructure.

The Merge Bottleneck

The safe way to merge a PR is straightforward: rebase on latest main, re-run CI, then merge. The catch is that this doesn’t scale. As our team grew, developers kept getting stuck in a loop ⇒ they’d rebase, kick off CI on the latest main, and by the time checks finished, someone else had already merged to main, invalidating their CI run.

This resulted in a significant loss of developer productivity and a waste of CI resources. We were at an inflection point. The process that was meant to ensure quality was now actively hindering it.

What is a Merge Queue?

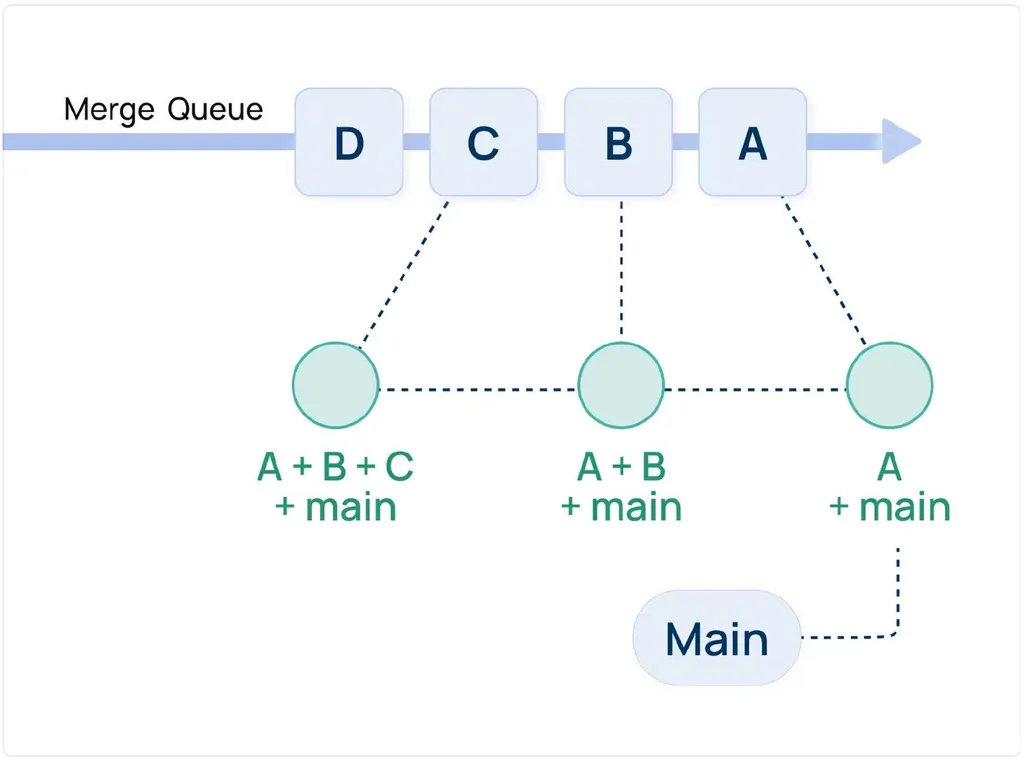

A merge queue automates the rebase → CI → merge cycle. PRs enter the queue, and the system ensures each one is tested against the latest main before being merged, keeping the branch stable while improving merge velocity. The simplest implementation is sequential. When a PR is added, the system creates a temporary commit (let’s call it <X+main>) by applying the PR’s changes on top of the latest main, then runs the full CI suite. If the checks pass, the PR is merged, and the process repeats for the next PR.

This guarantees a stable main, but it introduces a new bottleneck. For a queue of N PRs, you’re looking at O(N) total merge time. The developer at the back of the line could be waiting a while.

Quest for Velocity

How could we speed up the process without sacrificing safety? We explored two primary architectural patterns.

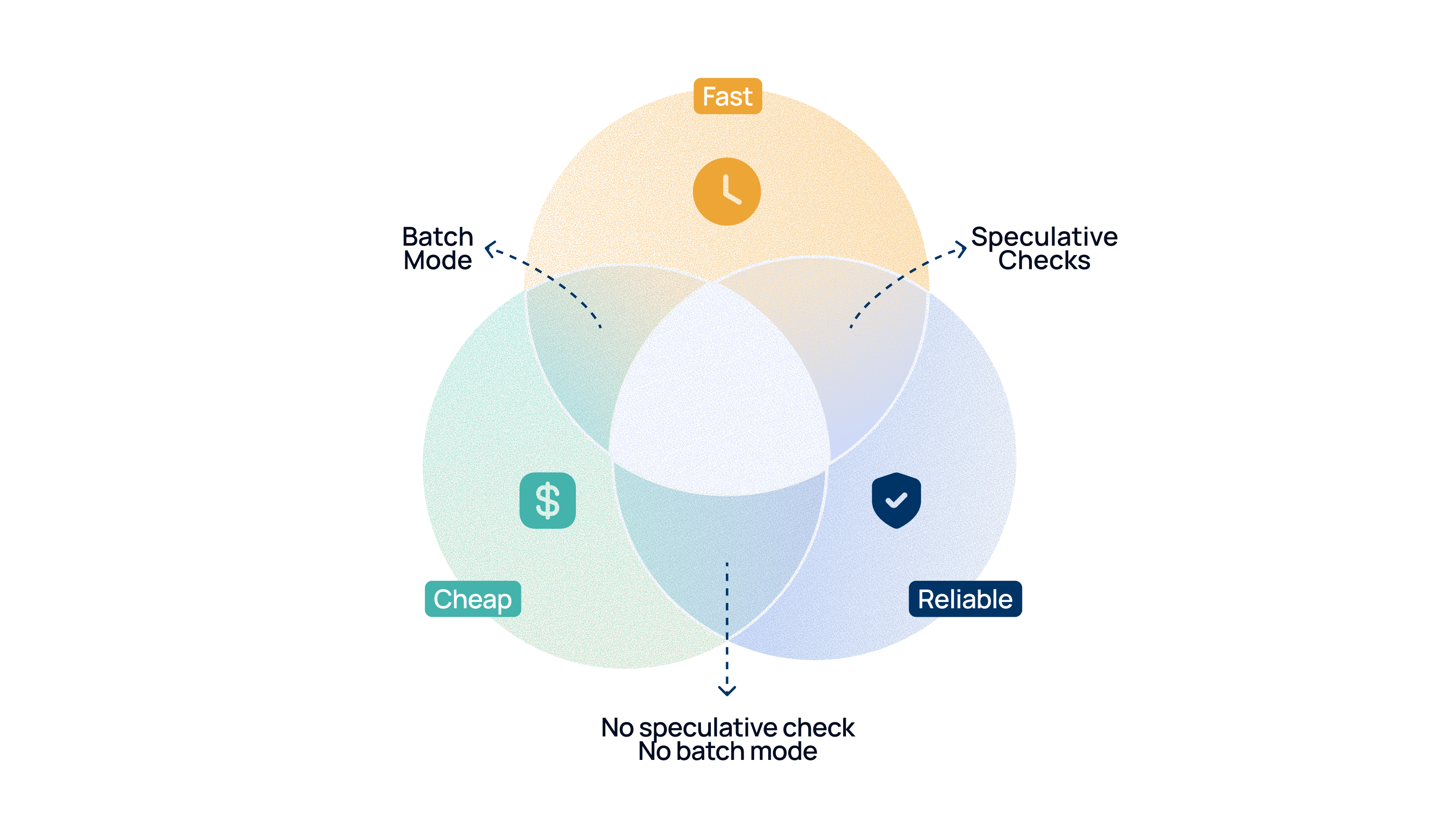

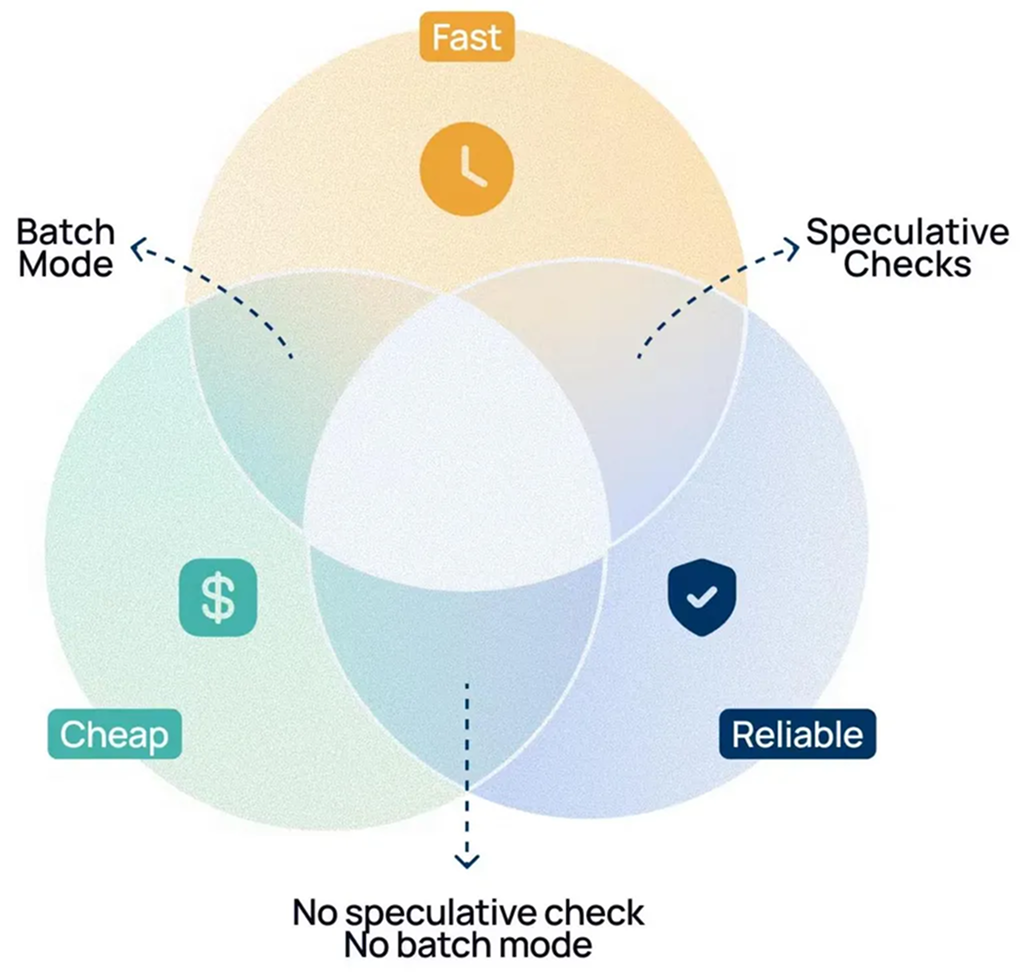

Approach 1: Batch Merges

One idea is to create a single, large commit that “batches” several PRs together (e.g. A+B+C+main) and run checks on that one commit.

Advantage: This is cheap and fast. Multiple PRs can be merged simultaneously using a reduced number of CI checks.

Disadvantage: Brittle. If the batch fails, you can’t tell which PR broke it. The fix is to reject the entire batch, which punishes healthy PRs for one bad one.

Approach 2: Speculative Checks (Our Chosen Path)

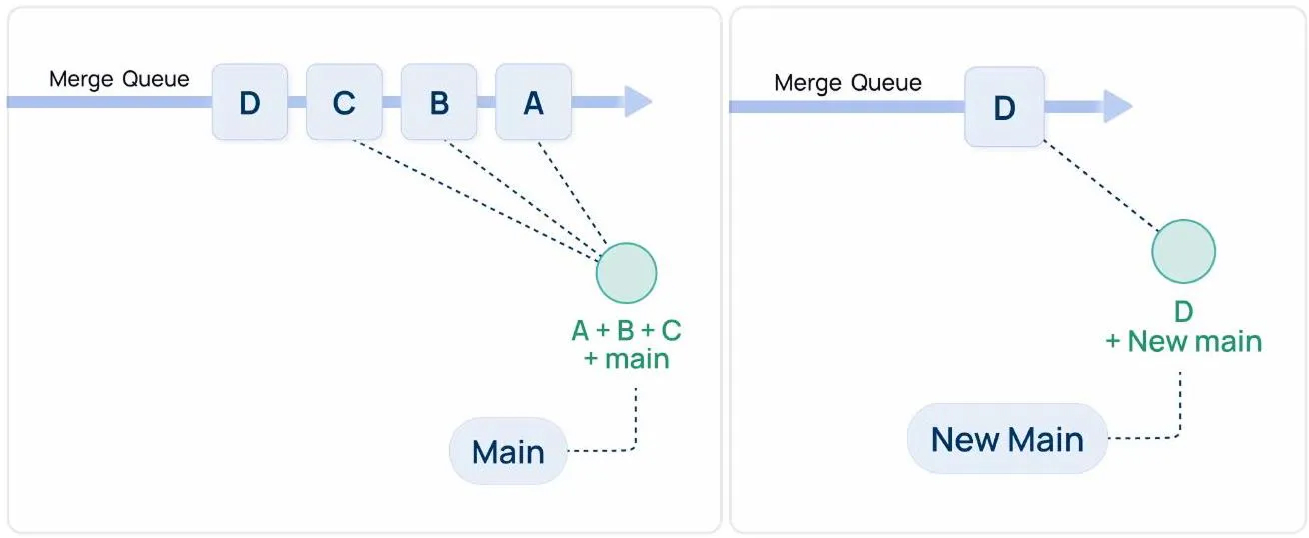

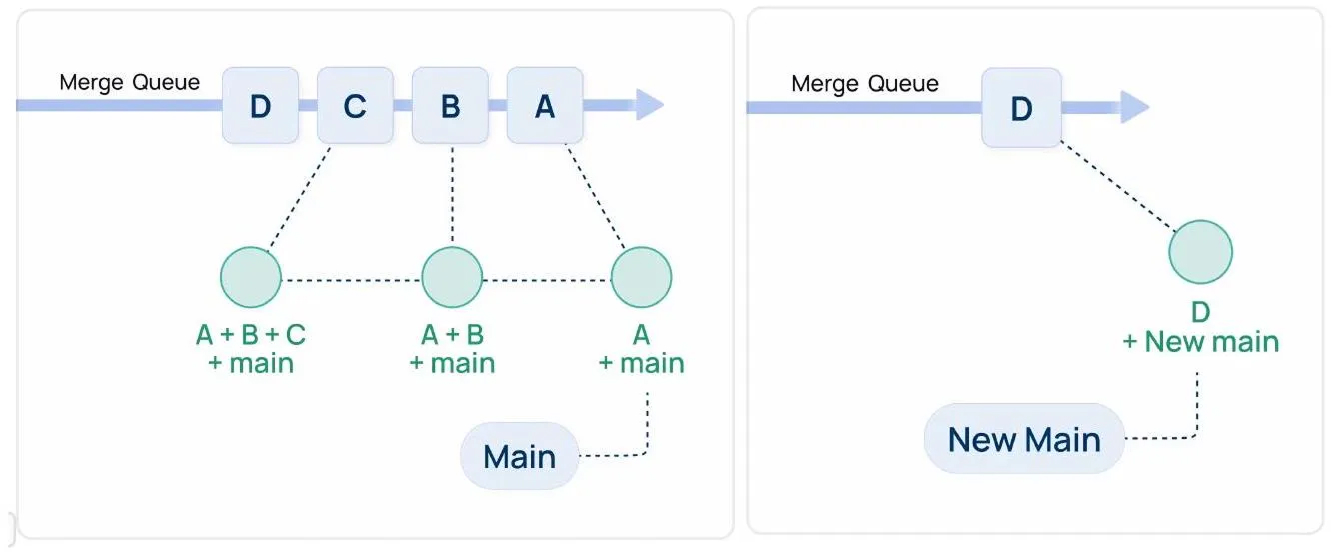

A more elegant solution is to use Speculative Checks. The idea is to spawn multiple CI checks in parallel, with each PR optimistically assuming that the PRs ahead in the queue will merge successfully.

For a queue with PRs A, B, and C, the system would run these checks simultaneously:

- On a commit representing

A + main - On a commit representing

A + B + main - On a commit representing

A + B + C + main

Advantage: When A’s checks pass, it merges immediately. B’s checks were already running on top of A, so B can merge right after, no new CI run needed. Time complexity drops to effectively O(1) within the speculative window.

Disadvantage: If A fails, the speculative runs for B and C are now invalid and need to restart. Another example: If B fails, then only B is removed and checks for C restart on top of A. Some CI waste, but we only eject the failing PR, not the whole batch.

Given our needs, Speculative Checking was the right way to go. We decided the velocity gain and reliability were worth the occasional wasted CI runs.

The “Build vs. Buy” Decision

Our expectations with a merge queue solution were:

- Auto Merge capability

- Skip Ahead: Helps in merging urgent PRs by moving them to the front

- Speculative Checks: Increase velocity of merges

- Observability Infrastructure

With these principles in mind, we evaluated some of the existing market solutions:

- Mergify: Powerful, state of the art, but pricing didn’t work for us.

- GitHub MQ: Has speculative checks, but is expensive and missing features we wanted (urgent merges, priority queues).

- Bors: Open-source, but no speculative checks.

After a thorough analysis, the path became clear. Building our own solution would be more cost-effective and would give us the freedom to build the exact features we needed.

Nirvana Fun Fact: We actually used GitHub MQ until August 2023 to validate the UX while simultaneously building our own. NirvanaMQ started as an internship project, built in under 10 weeks!

Under the Hood of NirvanaMQ

Building our own merge queue was a formidable challenge that required us to design a complete system, from event handling to the core merge algorithm.

An Event-Driven Architecture

NirvanaMQ doesn’t poll GitHub; rather, it reacts to GitHub’s events. A developer adds a merge_ready label to their PR, and the merge queue system starts tracking its events.

Our repo requires: CI passing, at least one approval, and all comments resolved. Once a PR is labeled with merge_ready, NirvanaMQ listens for relevant GitHub Webhooks:

- PR events: open/close, label changes, new commits pushed, ready for review

- Check suite events: CI status changes

- Review events: approvals, change requests

- Review thread events: comments resolved/unresolved

- Push events to main: admin force-merges outside of NirvanaMQ

Under the hood, we created a GitHub Bot with the necessary permissions and specific webhook requirements. We also needed the bot to perform some more repo-based actions (like merging PRs, etc.), which we will discuss further.

The Core Algorithm

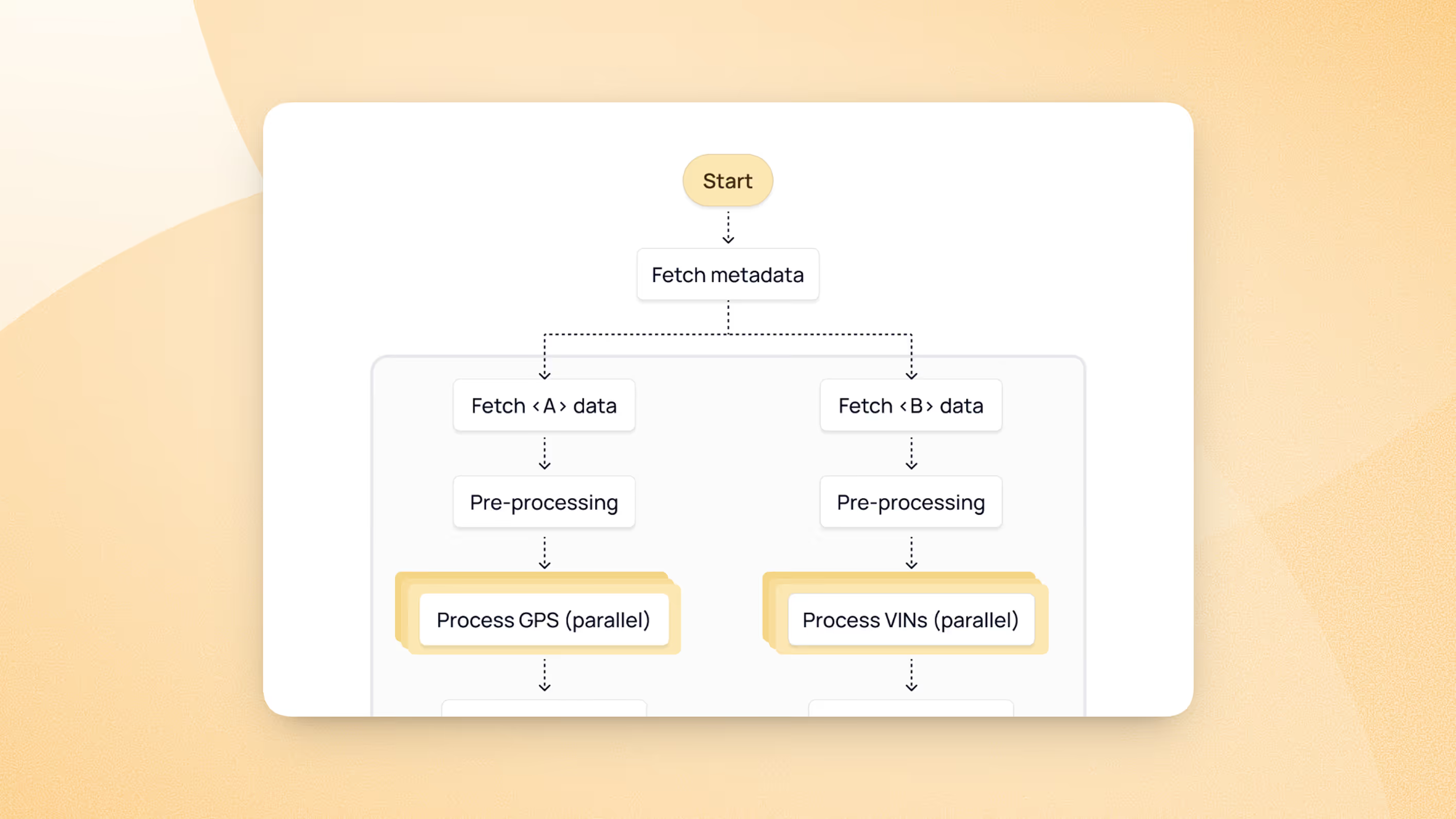

Anatomy of a Speculative Check

We first need to break down what goes into a single speculative test run. Each queue element is a carefully constructed combination of two components:

- The PR: This is the original pull request submitted by the developer.

- The Speculative PR: A draft PR containing the original PR’s code, while optimistically assuming that PRs ahead get merged. This is what the speculative CI actually runs on. For example, in the figure below, the speculative PR for B is on commit A+B+main

The Four Golden Rules

The core of NirvanaMQ is this simple but relentless ReconcileQueue process. It ensures that the following golden rules are always met, hence keeping the queue clean, consistent, and always moving forward.

- Rule 1: PRs must actually be ready. Approvals in place, no unresolved comments, CI passing on the original PR. For instance, if someone revokes approval while a PR is in the queue, ReconcileQueue ejects it.

- Rule 2: Front of the line gets priority. The first PR’s speculative checks must always be running. The instant they pass, ReconcileQueue merges it.

- Rule 3: Failures get removed immediately. If a PR’s speculative check fails, it’s removed from the queue.

- Rule 4: Speculation Chain must always be valid. If a PR is removed from the queue, ReconcileQueue ensures that speculative checks are restarted on the subsequent PRs.

The Core Components

NirvanaMQ is more than just an algorithm; it’s a complete system with several components apart from the actual queue. Let’s discuss some of them:

Git State Management

NirvanaMQ maintains its own local clone of the repository. This is essential for performing Git operations like creating new branches for speculative checks and pushing them to GitHub, deleting outdated speculative branches, and many other reasons.

GitHub API Wrapper

We built a wrapper around the GitHub API to perform actions like creating and closing the draft PRs used for speculative checks, merging the actual PRs, posting comments on PRs to keep developers informed, and many other use cases.

The Infrastructure

The system runs on AWS and comprises three core components:

- Event Capture (AWS Lambda): Receives GitHub webhooks, verifies integrity, passes them along to AWS SQS.

- Event Buffer (AWS SQS): Buffers webhook payloads so we can handle traffic spikes and don’t lose events during service failures.

- Event Processing (AWS ECS): The core NirvanaMQ service. Consumes events from SQS, runs the algorithm, orchestrates Git and GitHub API operations.

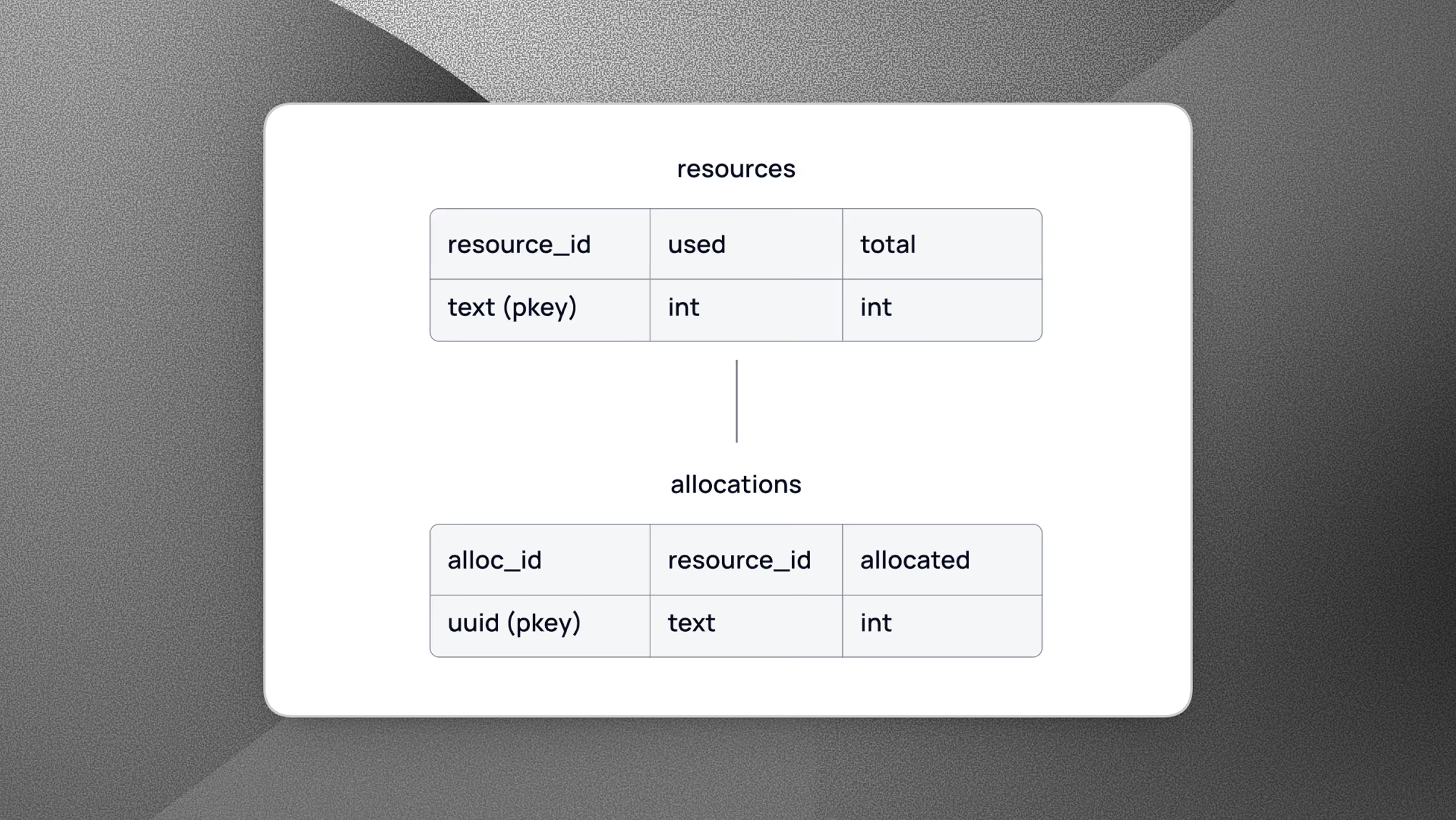

What happens if NirvanaMQ crashes?

A service that acts as the gatekeeper for your main branch must be incredibly reliable. This begs the question: what happens if the NirvanaMQ service itself crashes or needs to be restarted? For many systems, the answer would be a database that persists the state of the queue. However, we made a deliberate choice to avoid the complexity and operational overhead of a database.

Instead, we treat GitHub as the source of truth. The full queue state is encoded in GitHub itself, in PR labels and in the branch naming conventions of our bot’s draft PRs. This allows for a stateless service that can recover its full operational state at any time.

The Recovery Process: A Step-by-Step Reconstruction

When the NirvanaMQ service starts up, it immediately initiates a recovery sequence. Here’s how it works:

- Inventory: Fetch all open PRs from the repo.

- Classify: Separate tracked user PRs (those with merge_ready label) from bot-created speculative draft PRs.

- Decode: Parse the speculative PRs’ branch names. These encode which user PR they test along with other necessary metadata.

- Reconstruct: Piece together the queue order from the decoded branch names.

- Clean up: Delete orphaned bot PRs. Post comments on affected user PRs letting developers know their PR was removed due to a restart and may need re-queuing.

- Resume: Run ReconcileQueue to restore all invariants and kick off fresh speculative checks as needed.

This stateless, self-healing recovery mechanism makes NirvanaMQ exceptionally robust. By cleverly using GitHub as our database, we get the resilience we need without the operational burden, keeping our system both powerful and lightweight.

Bug Fixes and Improvements Over Time

- Webhook size limits: SQS has a 256KB message limit, and some webhooks exceeded it. We added GZIP compression in Lambda before forwarding to SQS. Failed webhooks get dumped to S3 for analysis.

- Don’t fully trust webhooks: GitHub occasionally sends delayed webhooks, which caused PRs to get stuck. We added periodic API polling to cross-check PR status, so we’re not solely dependent on webhook timeliness.

- Paginate your API calls: Embarrassing but worth sharing, we initially forgot to paginate some crucial GitHub APIs (the intern who built NirvanaMQ is now the author of this blog)

- Health checks and auto-restart: Due to the non-idempotent nature of some GitHub APIs, in case of GitHub API errors, NirvanaMQ sometimes reaches an unresolvable inconsistent state, resulting in errors being thrown and PRs getting stuck. Rather than manual intervention or putting effort into making each API wrapper idempotent, we added AWS health checks that auto-restart the service, letting crash recovery handle the rest.

- Blobless cloning: A full clone of our monorepo in NirvanaMQ takes ~10 minutes. That’s a brutal startup time for a service that needs to recover quickly. Switching to blobless cloning brought it down to seconds.

The Future of NirvanaMQ

No software is ever truly finished. While NirvanaMQ has already transformed our development process, we have many ideas for its future:

- Dashboard / CLI: NirvanaMQ already exposes API endpoints for health, queue status, adding/removing PRs, skip-ahead, etc. We plan to build either a dashboard or a CLI on top.

- Stacked PR support: Merging chains of dependent PRs.

- Priority classes: Currently NirvanaMQ only supports jumping urgent PRs to the front, but in the future we can build multiple priority classes.

- Fixed draft PR pool: Instead of creating and closing a new draft PR for each speculative check, maintain a fixed pool matching the speculative degree.

Wrapping Up: More Than Just a Tool

NirvanaMQ has transformed our merge process from a source of anxiety into a seamless, automated workflow. It has drastically increased our merge throughput, guaranteed the health of our main branch, and most importantly, freed our engineers to focus on what truly matters: building and shipping incredible products. NirvanaMQ merged ~7k PRs in 2025, and the number is only increasing as the team grows. Here’s a quick comparison of capabilities between NirvanaMQ and GitHub’s Merge queue:

Originally started as an internship project, it is now a core piece of our engineering infrastructure. If your team is hitting similar scaling pains, we hope this writeup gives you a useful starting point, whether you build your own or pick an off-the-shelf solution.

Come Build With Us

NirvanaMQ is just one example of the kind of problems we solve at Nirvana. From developer tooling and infrastructure to core product engineering and AI, we’re a team that takes ownership, moves fast, and isn’t afraid to build from scratch when the situation calls for it.

If working on challenges like these excites you, we’d love to hear from you. Check out our open roles at nirvanatech.com/careers!